
Your Lakehouse is doing more than anyone planned for. Bronze, silver, and gold pipelines are multiplying. DBU spend is climbing faster than revenue. Delta Live Tables, Workflows, Structured Streaming jobs, MLflow experiments, and Unity Catalog assets are all producing signals — and most observability tools were never built to read them. Before you add another product to the Lakehouse, here is what actually matters.

1. Why This Platform Changes the Observability Conversation

Why Databricks Observability Is Different

Databricks is not a warehouse, a data lake, or a pipeline engine alone. It is a unified Lakehouse where all three live together — with compute elasticity, Delta Lake transactional storage, Unity Catalog governance, Delta Live Tables (DLT) declarative pipelines, Workflows orchestration, Structured Streaming, MLflow, the Feature Store, Vector Search, and Model Serving layered into one platform. That breadth is the value. It is also why generic database observability fails here.

Issues on Databricks rarely originate in a single table. A silently failing Auto Loader stream lands a partial bronze file, the downstream DLT pipeline runs to completion without flagging an expectation violation because the rule was never added, the silver table updates with 40 percent fewer rows, a gold aggregation under-reports revenue, a dashboard shows a number that looks plausible, and an ML feature table retrains on corrupted inputs. By the time anyone asks why the KPI moved, the broken pipeline has already run three more times and burned DBUs each time. Lakehouse failures are cross-layer failures — and they demand observability that sees every layer.

The tool you pick will sit inside your Lakehouse, read its most sensitive metadata, consume its compute, and shape how your team spends every incident hour. Choosing the wrong one is expensive — not just in license fees, but in DBUs, engineer time, and eroded trust in data.

2. What Observability Actually Has to Cover

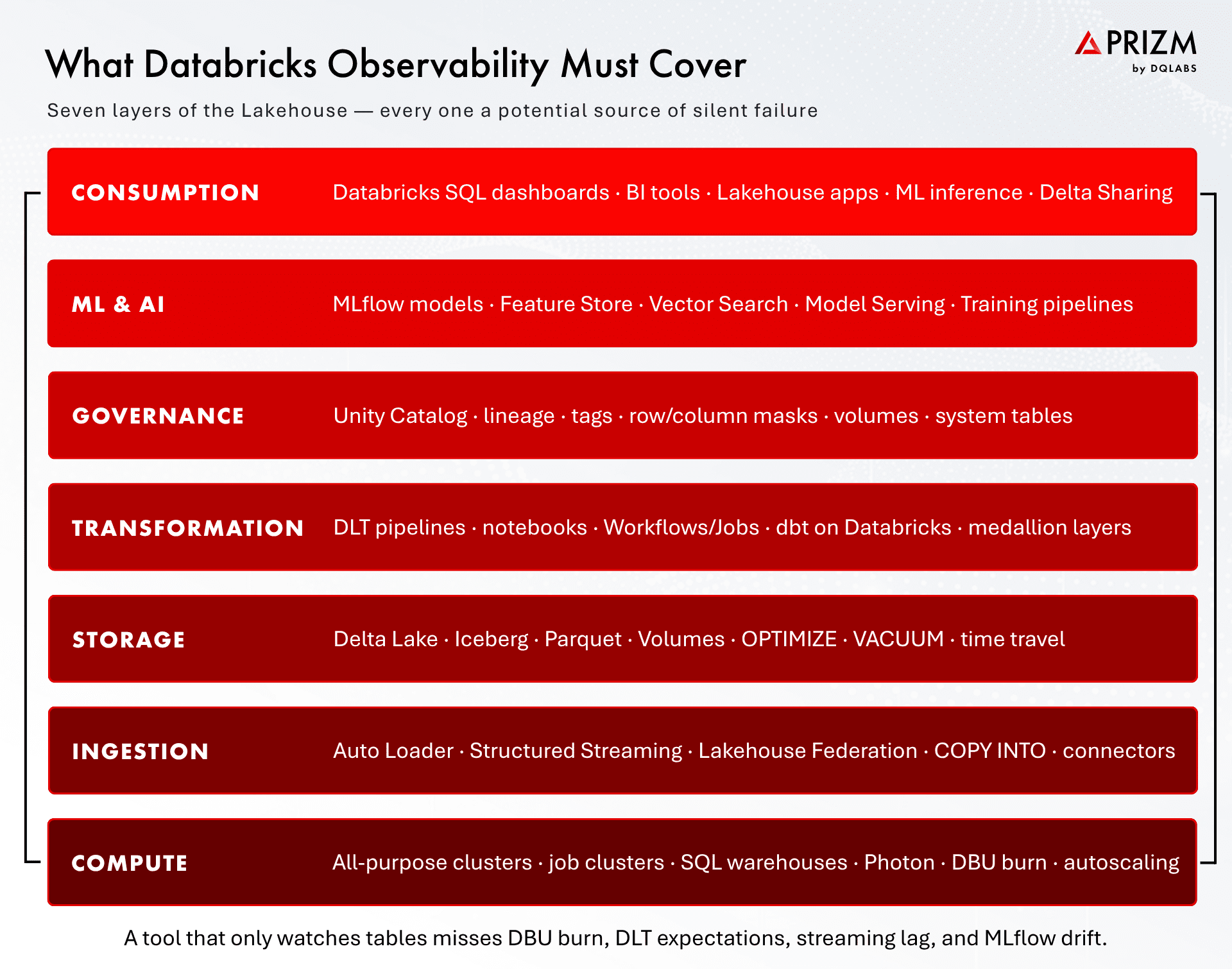

The Seven Layers You Must Observe on Databricks

Serious Databricks observability covers seven layers: compute, ingestion, storage, transformation, governance, ML/AI, and consumption. Miss one and you own a blind spot that will produce the next incident.

2.1 Compute and DBU Burn

All-purpose clusters, job clusters, and SQL warehouses are where DBU spend lives. Queue time, autoscaling thrash, Photon utilization, idle warehouses, and runaway notebooks are all compute-layer signals. An observability tool that ignores cluster telemetry is monitoring half the platform — and the half your CFO cares about most.

2.2 Ingestion and Streaming

Auto Loader, Structured Streaming, Lakehouse Federation, and COPY INTO jobs are the front door. Streaming observability specifically is underserved: watermark lag, trigger interval drift, checkpoint health, input-rate anomalies, and backpressure all cause silent data loss long before any downstream table looks wrong.
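As a rough illustration of the streaming signals named above, the sketch below derives watermark lag and a falling-behind flag from a single progress event. The field names follow the JSON shape of Structured Streaming progress events (`timestamp`, `eventTime.watermark`, `inputRowsPerSecond`, `processedRowsPerSecond`); the function name, the 600-second threshold, and the sample values are assumptions made for the example, not part of any product.

```python
from datetime import datetime


def parse_ts(value: str) -> datetime:
    """Parse an ISO-8601 UTC timestamp such as '2024-05-01T12:00:00.000Z'."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def streaming_signals(progress: dict, max_lag_seconds: float = 600.0) -> dict:
    """Derive simple health signals from one progress event (hypothetical helper)."""
    batch_time = parse_ts(progress["timestamp"])
    watermark = progress.get("eventTime", {}).get("watermark")
    # Lag here means: how far the watermark trails the batch trigger time.
    lag = (batch_time - parse_ts(watermark)).total_seconds() if watermark else None
    input_rate = progress.get("inputRowsPerSecond", 0.0)
    processed_rate = progress.get("processedRowsPerSecond", 0.0)
    return {
        "watermark_lag_seconds": lag,
        "lag_breached": lag is not None and lag > max_lag_seconds,
        # Rows arriving faster than they are processed suggests backpressure.
        "falling_behind": input_rate > processed_rate,
    }


# Hypothetical progress event, trimmed to the fields used above.
sample_progress = {
    "batchId": 42,
    "timestamp": "2024-05-01T12:00:00.000Z",
    "eventTime": {"watermark": "2024-05-01T11:45:00.000Z"},
    "inputRowsPerSecond": 1200.0,
    "processedRowsPerSecond": 900.0,
}
signals = streaming_signals(sample_progress)
```

The watermark trails the latest event time by the configured delay by design, so any real threshold would need to sit above that delay rather than at zero.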

2.3 Delta Lake Storage

Delta tables carry rich metadata: transaction history, OPTIMIZE and VACUUM cadence, Z-ORDER clustering, file-size distribution, and the table versions that time travel relies on. A tool that cannot read Delta history cannot distinguish a real anomaly from a scheduled compaction, and cannot use time travel to reconstruct what the data looked like when the incident started.
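To make the compaction-versus-anomaly distinction concrete, here is a minimal sketch that classifies the commits between two table versions. It assumes history rows shaped like the output of `DESCRIBE HISTORY` (a `version` and an `operation` per commit); the operation names listed are the ones that commonly appear there and should be checked against your runtime, and the helper name and sample rows are hypothetical.

```python
# Operations that rewrite or remove files without changing table contents.
MAINTENANCE_OPERATIONS = {"OPTIMIZE", "VACUUM START", "VACUUM END"}


def classify_window(history: list[dict], start_version: int, end_version: int) -> str:
    """Classify commits in the half-open version window (start, end]."""
    operations = [
        row["operation"]
        for row in history
        if start_version < row["version"] <= end_version
    ]
    if not operations:
        return "no_commits"
    if all(op in MAINTENANCE_OPERATIONS for op in operations):
        # A file-count or file-size shift here is most likely compaction.
        return "maintenance_only"
    return "data_change"


# Hypothetical history rows, newest first, trimmed to the fields used above.
sample_history = [
    {"version": 12, "operation": "MERGE"},
    {"version": 11, "operation": "OPTIMIZE"},
    {"version": 10, "operation": "VACUUM END"},
    {"version": 9, "operation": "VACUUM START"},
    {"version": 8, "operation": "WRITE"},
]
```

With these rows, a file-count shift between versions 8 and 11 would be attributed to maintenance, while the same shift between versions 8 and 12 would warrant a closer look because a MERGE landed in the window.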

2.4 Transformation: DLT, Workflows, Notebooks, dbt

This is the connective tissue. DLT expectations tell you when a declarative pipeline has seen bad data. Workflow run metadata tells you when a Job failed, retried, or drifted in duration. Notebook-based pipelines and dbt-on-Databricks projects introduce their own lineage and test signals. Real observability reads all four — and ties every failure back to the downstream assets it affects.
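Tying a failure back to the assets it affects is, at its simplest, a graph walk. The sketch below does a breadth-first traversal over a lineage map; the map, the asset names, and the function name are illustrative assumptions, and a real lineage graph would come from catalog metadata rather than a hard-coded dictionary.

```python
from collections import deque


def downstream_assets(lineage: dict[str, list[str]], failed: str) -> list[str]:
    """Return every asset reachable downstream of `failed`, nearest first."""
    seen = {failed}
    ordered = []
    queue = deque([failed])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:  # guards against cycles and duplicate paths
                seen.add(child)
                ordered.append(child)
                queue.append(child)
    return ordered


# Hypothetical lineage: each key feeds the assets in its list.
sample_lineage = {
    "bronze.orders": ["silver.orders"],
    "silver.orders": ["gold.revenue", "features.order_stats"],
    "gold.revenue": ["dashboard.exec_revenue"],
}
impacted = downstream_assets(sample_lineage, "bronze.orders")
```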

2.5 Unity Catalog Governance

Unity Catalog is the governance backbone. System tables, lineage views, tags, row filters and column masks, and volumes are the truth about what exists, who uses it, and what is sensitive. Observability that integrates natively with Unity Catalog inherits governance; observability that ignores it either duplicates metadata or, worse, exposes masked values in alerts and samples.

2.6 ML, MLflow, and the Feature Store

ML reliability is now data reliability. Feature drift, training-serving skew, silent schema changes in input features, and model registry lineage are observability concerns, not ML ops concerns. Teams that separate the two end up with two different tools, two different sources of truth, and two different blind spots.
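One common way to quantify feature drift is the population stability index (PSI), which compares how a feature is distributed across bins at training time and at serving time. The article does not name a specific metric, so PSI is an assumption here, as are the equal-width binning, the small floor used to avoid dividing by zero, and the synthetic values.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population stability index using equal-width bins from `expected`."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 for constant features

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        floor = 1e-6  # keeps log() and division defined for empty bins
        return [max(c / len(values), floor) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic example: a uniform feature and a copy shifted upward by 0.5.
training = [i / 100 for i in range(100)]
serving = [v + 0.5 for v in training]
drift = psi(training, serving)
```

A frequently quoted rule of thumb treats PSI above roughly 0.25 as significant drift, though the right cut-off depends on the feature and the model.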

2.7 Consumption

Databricks SQL dashboards, BI tools, Delta Sharing recipients, Lakehouse apps, and model serving endpoints are what the business actually touches. Lineage that stops at the gold layer misses the last mile — which is exactly where stakeholders notice something is wrong.

If a tool you are evaluating talks only about tables, ask what it does about DBUs, DLT expectations, streaming watermarks, Unity Catalog lineage, and MLflow. The answer tells you whether you are buying observability or just another alert source.

3. The Hidden Cost Problem

The DBU Problem: Cost-Aware Observability

Here is a scenario most Databricks teams have lived through. A new observability tool starts profiling every table it can find. Deep statistical checks (distribution, uniqueness, correlation, pattern analysis) run on thousands of Delta tables on a nightly schedule. Dashboards fill with metrics. Six weeks later, someone in FinOps asks why DBU spend is up 30 percent. The answer, almost always, is the observability tool.

Generic monitoring runs the same checks on every asset because it has no way to tell which ones matter. A gold fact table feeding the executive revenue dashboard gets the same treatment as a long-forgotten bronze staging table from a 2022 migration nobody has queried in a year. Both pay the same DBU tax — and the long tail of low-value assets, often 60 to 80 percent of the catalog, silently consumes the majority of your observability budget.

Criticality-aware profiling is the fix. A modern Databricks observability tool should score every asset on usage patterns, upstream and downstream lineage depth, freshness expectations, consumer count, and governance tags — then use that score to drive check depth. Critical gold tables get deep statistical profiling and tight SLAs. Bronze staging and analyst sandboxes get lightweight polling or none at all. Done well, this reduces observability-driven DBU burn by 40 to 70 percent while concentrating signal on assets that actually matter to the business.
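The scoring idea can be sketched in a few lines. Everything specific below is an assumption made for illustration: the weights, the saturation points, the tag names, and the tier cut-offs are invented, and a real scorer would be tuned against actual usage and lineage data.

```python
from dataclasses import dataclass
from typing import Optional

CRITICAL_TAGS = frozenset({"finance", "pii", "regulated"})  # hypothetical tags


@dataclass
class Asset:
    name: str
    queries_30d: int
    consumers: int
    downstream_assets: int
    freshness_sla_hours: Optional[float] = None
    tags: frozenset = frozenset()


def criticality(asset: Asset) -> float:
    """Weighted score in [0, 1]; each signal saturates at an assumed ceiling."""
    score = 0.3 * min(asset.queries_30d / 1000, 1.0)
    score += 0.2 * min(asset.consumers / 20, 1.0)
    score += 0.3 * min(asset.downstream_assets / 10, 1.0)
    if asset.freshness_sla_hours is not None and asset.freshness_sla_hours <= 24:
        score += 0.1
    if asset.tags & CRITICAL_TAGS:
        score += 0.1
    return score


def check_depth(asset: Asset) -> str:
    """Map the score to a profiling tier."""
    score = criticality(asset)
    if score >= 0.6:
        return "deep"
    if score >= 0.3:
        return "standard"
    if score > 0:
        return "light"
    return "none"


gold = Asset("gold.revenue", 5000, 40, 12, 1.0, frozenset({"finance"}))
silver = Asset("silver.orders", 300, 5, 3)
stale = Asset("bronze.staging_2022", 0, 0, 0)
```

Here the gold table lands in the deep tier, the lightly used silver table gets light checks, and the unused staging table is skipped entirely, which is where the compute savings come from.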

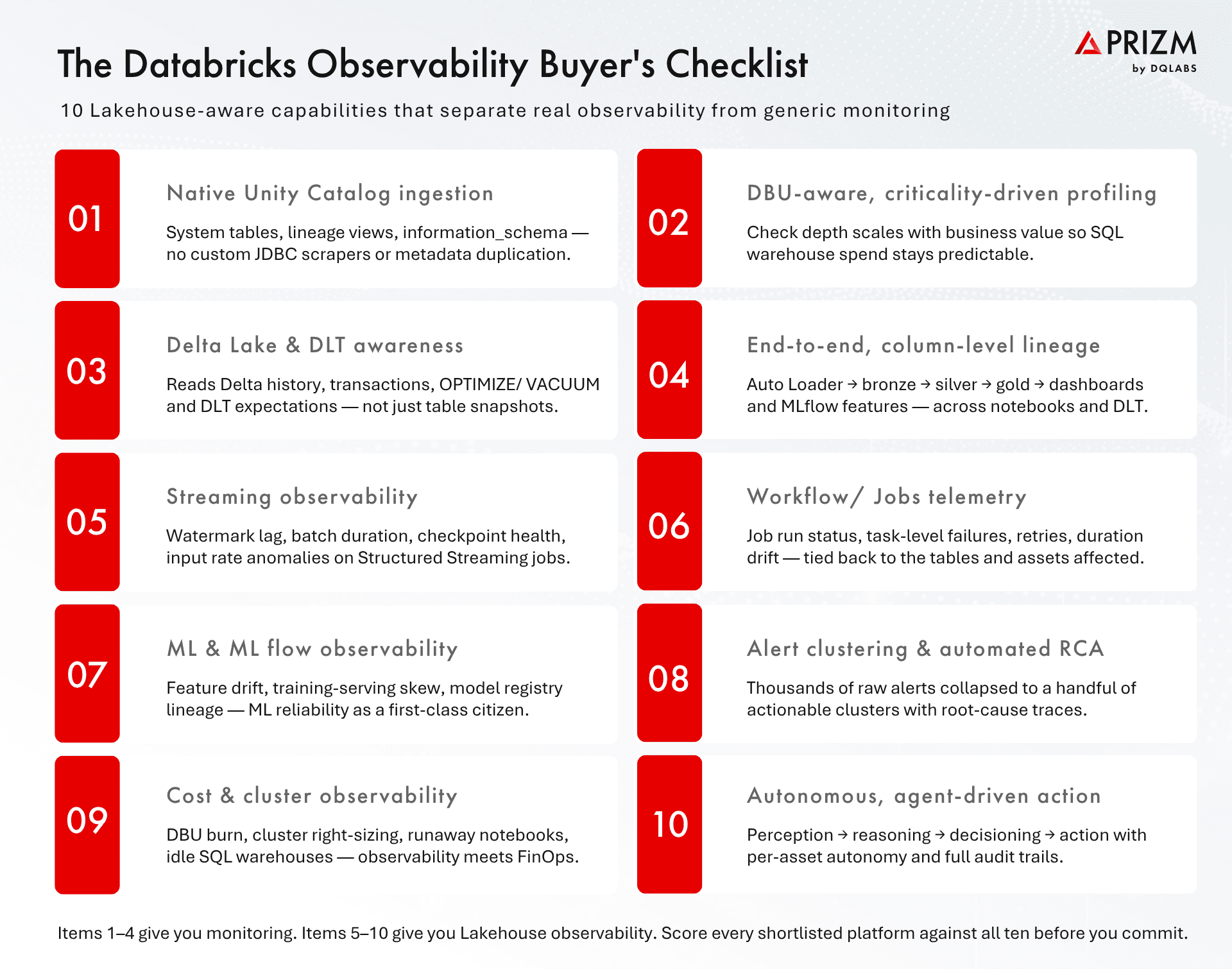

4. The Buyer’s Checklist — 10 Capabilities

The Capabilities That Matter

The ten capabilities below are the ones to bring to every vendor conversation. The gap between a tool that delivers the first four and a tool that delivers all ten is the gap between another alert fire hose and true operational leverage.

Native Unity Catalog ingestion — system tables, lineage views, information_schema, not custom JDBC scrapers.

DBU-aware, criticality-driven profiling — check depth scales with asset value so Lakehouse compute stays predictable.

Delta Lake and DLT awareness — reads Delta history, OPTIMIZE/VACUUM activity, and DLT expectation outcomes, not just table snapshots.

End-to-end, column-level lineage — Auto Loader through bronze, silver, gold, dashboards, and MLflow features — across notebooks, DLT, and dbt.

Streaming observability — watermark lag, batch duration, checkpoint health, and input-rate anomalies on Structured Streaming jobs.

Workflow and Jobs telemetry — run status, task-level failures, retry patterns, and duration drift tied back to affected assets.

ML and MLflow observability — feature drift, training-serving skew, and model registry lineage as first-class signals.

Alert clustering and automated root cause analysis — thousands of raw signals collapsed into a handful of actionable clusters.

Cost and cluster observability — DBU burn, right-sizing, runaway notebooks, idle SQL warehouses — observability meets FinOps.

Autonomous, agent-driven action — perception, reasoning, decisioning, and action agents with per-asset autonomy controls and full audit trails.
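For the alert clustering item in the list above, one simple approach is to group alerts under the most upstream asset that is also alerting. The sketch below is an assumption about how such grouping could work, not a description of any vendor's algorithm; it only follows parents that are themselves alerting, so a chain broken by a healthy intermediate asset would split into separate clusters.

```python
def cluster_alerts(upstream: dict[str, list[str]], alerting: set[str]) -> dict[str, list[str]]:
    """Group alerting assets by their most upstream alerting ancestor."""

    def roots(asset: str, seen: frozenset) -> set[str]:
        parents = [
            p for p in upstream.get(asset, []) if p in alerting and p not in seen
        ]
        if not parents:
            return {asset}  # nothing alerting above this asset: it is a root
        found: set[str] = set()
        for parent in parents:
            found |= roots(parent, seen | {asset})
        return found

    clusters: dict[str, list[str]] = {}
    for asset in sorted(alerting):
        for root in roots(asset, frozenset()):
            clusters.setdefault(root, []).append(asset)
    return clusters


# Hypothetical lineage: each key lists the assets it reads from.
sample_upstream = {
    "silver.orders": ["bronze.orders"],
    "gold.revenue": ["silver.orders"],
    "dashboard.exec": ["gold.revenue"],
    "gold.inventory": ["silver.stock"],
}
sample_alerting = {
    "bronze.orders", "silver.orders", "gold.revenue",
    "dashboard.exec", "gold.inventory",
}
clusters = cluster_alerts(sample_upstream, sample_alerting)
```

Five raw alerts collapse into two clusters here: one rooted at `bronze.orders` and one isolated alert on `gold.inventory`.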

5. Red Flags in Databricks Observability Evaluations

Some things you will hear in vendor demos are worth treating as warnings.

5.1 “Just write a SQL expectation and we will run it.”

If the core value proposition is that your team defines every rule by hand, you are buying a rule engine. Rules do not scale past a few hundred assets. Modern observability learns baselines on thousands of assets automatically.

5.2 “We connect to Databricks via JDBC.”

Generic JDBC means the tool treats Databricks as a commodity SQL engine. It will not read Unity Catalog lineage, Delta history, DLT expectations, or system tables — and it will miss everything Lakehouse-native.

5.3 “Our ML models work perfectly on day one.”

Real anomaly detection needs historical data to build seasonality-aware baselines. A tool that claims perfect detection out of the box is either running static thresholds or preparing you for false positives at scale.
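A small sketch shows why history matters. The detector below keeps a separate baseline per weekday and refuses to judge until it has enough samples; the z-score test, the minimum of four samples, and the row counts are illustrative assumptions rather than a recommended configuration.

```python
from statistics import mean, stdev


def weekday_anomaly(
    history: list[tuple[int, int]],
    weekday: int,
    observed: int,
    min_samples: int = 4,
    z_threshold: float = 3.0,
) -> str:
    """Compare `observed` with past row counts from the same weekday.

    `history` holds (weekday, row_count) pairs, with weekday 0 = Monday.
    """
    samples = [count for day, count in history if day == weekday]
    if len(samples) < min_samples:
        return "insufficient_history"
    mu, sigma = mean(samples), stdev(samples)
    if sigma == 0:
        return "normal" if observed == mu else "anomaly"
    z = abs(observed - mu) / sigma
    return "anomaly" if z > z_threshold else "normal"


# Hypothetical daily row counts: busy Mondays (0), quiet Saturdays (5).
sample_counts = [
    (0, 1000), (0, 1020), (0, 980), (0, 1010),
    (5, 200), (5, 210), (5, 190), (5, 205),
]
```

With this history, 200 rows on a Saturday is normal while 200 rows on a Monday is flagged; a single static threshold would get one of the two wrong, and with only a week or two of data the detector cannot say anything yet.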

5.4 “We only read metadata — zero cost impact.”

Read-only metadata access is cheap, but meaningful profiling — distribution, statistics, uniqueness — runs queries against your clusters and warehouses. Ask exactly what queries run, how often, against which clusters, and what the expected DBU impact is for a catalog your size.
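A back-of-the-envelope estimate helps frame that conversation. The function below multiplies out table count, run frequency, check duration, and the compute's DBU rate; all four inputs in the example are made-up numbers, and real figures would have to come from the vendor and your own billing data.

```python
def daily_profiling_dbus(
    tables: int,
    runs_per_day: float,
    seconds_per_check: float,
    dbu_per_hour: float,
) -> float:
    """Estimate DBUs consumed per day by profiling queries (rough model)."""
    compute_hours = tables * runs_per_day * seconds_per_check / 3600
    return compute_hours * dbu_per_hour


# Hypothetical inputs: 5,000 tables, one nightly run, 30 seconds per table,
# on compute that bills 12 DBUs per hour.
profile_everything = daily_profiling_dbus(5000, 1, 30, 12)

# Same assumptions, but deep checks limited to 2,000 higher-value tables.
profile_critical_only = daily_profiling_dbus(2000, 1, 30, 12)
```

Under these invented inputs the first case comes to roughly 500 DBUs per day and the second to roughly 200. The model ignores cluster start-up time, concurrency, and caching, so it is a floor for discussion rather than a forecast.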

5.5 “You can always build a custom Python connector.”

Every connector you build yourself is glue code your platform team owns forever. Real tools integrate natively with Unity Catalog, DLT, Workflows, MLflow, and the rest of the Databricks surface area — with documentation, maintained by the vendor.

6. How Prizm Approaches Data Observability for Databricks

Prizm by DQLabs is an AI-native, self-driving platform for data observability, quality, and context — with Databricks as a first-class environment. Rather than retrofitting generic monitoring onto the Lakehouse, Prizm is designed from the metadata up to understand how Databricks actually works.

6.1 Native Lakehouse Metadata, Not Scrapers

Prizm ingests directly from Unity Catalog system tables, lineage views, information_schema, Delta transaction history, DLT pipeline metadata, Workflow run outputs, and MLflow tracking — combining them with metadata from dbt, BI tools, and the rest of the surrounding stack. Every signal is tied back to a single, unified asset graph.

6.2 Criticality-Driven Profiling That Protects DBU Spend

Every asset is automatically scored on four dimensions — operational (volume, freshness), usage (query frequency, distinct consumers), lineage depth (upstream and downstream dependencies), and governance (tags, ownership, sensitivity). That score drives the depth of profiling applied to each asset. Critical gold tables get deep statistical checks, distribution drift detection, and tight SLAs; bronze staging and sandbox tables get lightweight polling or none at all. Customers typically see Lakehouse observability spend stay flat even as their Unity Catalog grows into the tens of thousands of assets.

6.3 Every Layer, One Pane of Glass

Prizm observes data (freshness, volume, schema, completeness, distribution, latency), pipelines (DLT expectations, Workflow runs, streaming lag, dbt tests), cost (DBU burn, cluster utilization, SQL warehouse idle time, runaway queries), usage (who queries what, how often, from which tools), and ML (feature drift, training-serving skew, model lineage). One platform, one asset graph, one set of alerts — no tool-switching during incidents.

6.4 Multi-Agent Autonomy with Human-in-the-Loop

A Perception agent detects issues. A Reasoning agent correlates thousands of raw signals into a handful of actionable clusters, traces root cause through column-level lineage, and quantifies blast radius. A Decisioning agent selects the right response under per-asset autonomy rules. An Action agent either executes the fix or presents a one-click-approve recommendation — with every action audited. Teams set autonomy per asset class: full auto for low-risk cleanup, recommend-only for production pipelines, review-required for anything touching finance or regulated data.
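The per-asset autonomy rules described above amount to a policy lookup plus an audit record. The sketch below is a hypothetical illustration and does not reflect Prizm's actual implementation: the class names, asset classes, and outcome labels are invented, and unknown asset classes default to the most restrictive level as a conservative assumption.

```python
from enum import Enum


class Autonomy(Enum):
    FULL_AUTO = "full_auto"
    RECOMMEND_ONLY = "recommend_only"
    REVIEW_REQUIRED = "review_required"


# Hypothetical policy mirroring the three asset classes named in the text.
POLICY = {
    "low_risk_cleanup": Autonomy.FULL_AUTO,
    "production_pipeline": Autonomy.RECOMMEND_ONLY,
    "finance_or_regulated": Autonomy.REVIEW_REQUIRED,
}

OUTCOMES = {
    Autonomy.FULL_AUTO: "executed",
    Autonomy.RECOMMEND_ONLY: "awaiting_one_click_approval",
    Autonomy.REVIEW_REQUIRED: "queued_for_review",
}


def decide(asset_class: str, proposed_fix: str, audit_log: list[dict]) -> str:
    """Pick a response for a proposed fix and record it in the audit log."""
    level = POLICY.get(asset_class, Autonomy.REVIEW_REQUIRED)
    outcome = OUTCOMES[level]
    audit_log.append(
        {
            "asset_class": asset_class,
            "fix": proposed_fix,
            "level": level.value,
            "outcome": outcome,
        }
    )
    return outcome


audit: list[dict] = []
first = decide("low_risk_cleanup", "drop orphaned temp table", audit)
second = decide("production_pipeline", "rerun failed task", audit)
third = decide("unclassified", "backfill partition", audit)
```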

6.5 Outcomes That Compound

In production Lakehouse environments, Prizm customers see 99.8 percent alert noise reduction, 60 percent faster mean time to resolution, and zero critical issues missed — without the DBU burn that comes from running deep profiling on everything. The result is not just fewer incidents, it is a data team that stops firefighting and starts building.

7. Frequently Asked Questions

What is data observability for Databricks?

Data observability for Databricks is the continuous monitoring of metadata, data quality, pipeline health, cost, and usage across every layer of the Lakehouse — compute (clusters, DBUs, SQL warehouses), ingestion (Auto Loader, Structured Streaming), Delta Lake storage, transformation (DLT, Workflows, notebooks, dbt), Unity Catalog governance, MLflow and Feature Store, and consumption (dashboards, Delta Sharing, apps). It uses AI and lineage-aware intelligence to detect, diagnose, and resolve issues before they reach business users.

How is Databricks observability different from general data observability?

Databricks is a Lakehouse, not a warehouse. It has unique surfaces — Unity Catalog, Delta Lake, DLT expectations, Structured Streaming, Workflows, MLflow, the Feature Store — that require native, metadata-level integration. Generic tools treating Databricks as a JDBC SQL endpoint miss DBU burn, pipeline expectations, streaming lag, model drift, and Unity Catalog lineage. True Databricks observability ingests these natively.

Why does criticality-aware profiling matter for Databricks?

Because profiling consumes DBUs. A tool that runs deep statistical checks on every asset — including thousands of low-value bronze staging tables and analyst sandboxes — can increase Databricks compute spend by 30 percent or more without adding proportional signal. Criticality-aware profiling scales check depth to each asset’s business importance and typically cuts observability-driven DBU burn by 40 to 70 percent.

Should a Databricks observability tool monitor DBU cost?

Yes. Cost and data reliability are two sides of the same coin on the Lakehouse. A runaway notebook, an idle SQL warehouse, an over-provisioned all-purpose cluster, and a DLT pipeline rerunning on dirty data all show up in the same observability workflow. Tools that monitor data and cost together give your FinOps and data teams one source of truth.

How does observability handle Structured Streaming on Databricks?

Real streaming observability tracks watermark lag, trigger interval drift, batch duration, checkpoint health, input-rate anomalies, and backpressure — then correlates streaming-layer issues with downstream bronze, silver, and gold table impacts. Static row-count checks cannot catch silent streaming failures; seasonality-aware, lineage-integrated observability can.

Does a Databricks observability tool need to respect Unity Catalog governance?

Absolutely. The tool must honor row filters and column masks, tag-based policies, and volume access when sampling data, writing documentation, or surfacing alerts. It should never expose masked or sensitive values. Tools that integrate natively with Unity Catalog turn observability into a governance accelerator; tools that bypass it introduce new risk.
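As an extra safeguard on the alerting side, sample rows can be redacted before they leave the platform. The sketch below assumes the set of sensitive columns has already been resolved from catalog policy metadata; here it is hard-coded, and the function name, column names, and placeholder are hypothetical.

```python
def redact_sample(
    rows: list[dict],
    masked_columns: set[str],
    placeholder: str = "***",
) -> list[dict]:
    """Return copies of `rows` with values in masked columns replaced."""
    return [
        {
            column: (placeholder if column in masked_columns else value)
            for column, value in row.items()
        }
        for row in rows
    ]


# Hypothetical failing rows attached to an alert.
sample_rows = [
    {"order_id": 101, "email": "a@example.com", "amount": None},
    {"order_id": 102, "email": "b@example.com", "amount": -5},
]
safe_rows = redact_sample(sample_rows, {"email"})
```

The original rows are left untouched, so the unredacted values never need to be copied into the alert payload at all.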

What does autonomous observability look like on Databricks?

A multi-agent system — perception, reasoning, decisioning, action — that continuously detects issues across Unity Catalog assets, correlates signals into root-cause clusters using column-level lineage, and either executes a fix or presents a one-click-approve recommendation to a human reviewer. Per-asset autonomy controls let teams set full automation for low-risk cleanup and review-required for anything touching finance, compliance, or regulated data. Every action is audited end-to-end.

8. Bringing It Together

Databricks is now the backbone of many modern data and AI organizations. Every dashboard the executive team trusts, every feature the ML team ships, every customer experience powered by Lakehouse data: all of it runs here. Observability is not a checkbox on the platform roadmap. It is the layer that determines whether the rest of the stack is worth trusting.

The right tool turns that trust into leverage. It gives engineers back their mornings, stewards a clear view of what is working, executives confidence in the numbers, and FinOps a real handle on DBU spend. The wrong tool becomes another alert pipeline nobody reads, another line item on the bill, another project deprioritized after eighteen months.

Prizm by DQLabs delivers AI-native, self-driving data observability, quality, and context for Databricks — from native Unity Catalog integration to autonomous agent-driven resolution, with criticality-aware profiling that keeps DBU spend predictable as your Lakehouse grows. Talk to our team to see how Prizm handles a Lakehouse the size of yours.
